Making RHOAI GPU-as-a-Service Work for You

Building a Scalable GPU-as-a-Service Platform

Transform static infrastructure into a dynamic, governed AI service.

In enterprise AI environments, the primary infrastructure challenge is maximizing the utilization of high-cost GPU accelerators while ensuring equitable access for diverse workloads. Static 1:1 allocation models—where a single developer locks an entire physical GPU for lightweight tasks—result in resource contention, idle capacity, and inflated infrastructure costs.

This course details the architecture and configuration required to deploy GPU-as-a-Service using Red Hat OpenShift AI. You will move beyond manual pod pinning to build an automated allocation engine that optimizes hardware density and enforces usage quotas at scale.

The Core Objective: Optimization at Scale

The goal of this deployment is to shift GPU management from "ticket-based provisioning" to "self-service governance." By implementing this architecture, you deliver three key outcomes:

-

Maximize Utilization (Density): Partition physical GPUs (e.g., NVIDIA L40S or A100) into smaller, functional units to support 4x–7x more concurrent users on existing hardware.

-

Enforce Fairness (Governance): Implement a global queuing system that manages priority and preemption, preventing resource hoarding by single tenants.

-

Streamline Consumption (Velocity): Abstract complex node topology (Taints, NVLink requirements) into standardized "Hardware Profiles" that simplify user access.

The Solution Architecture

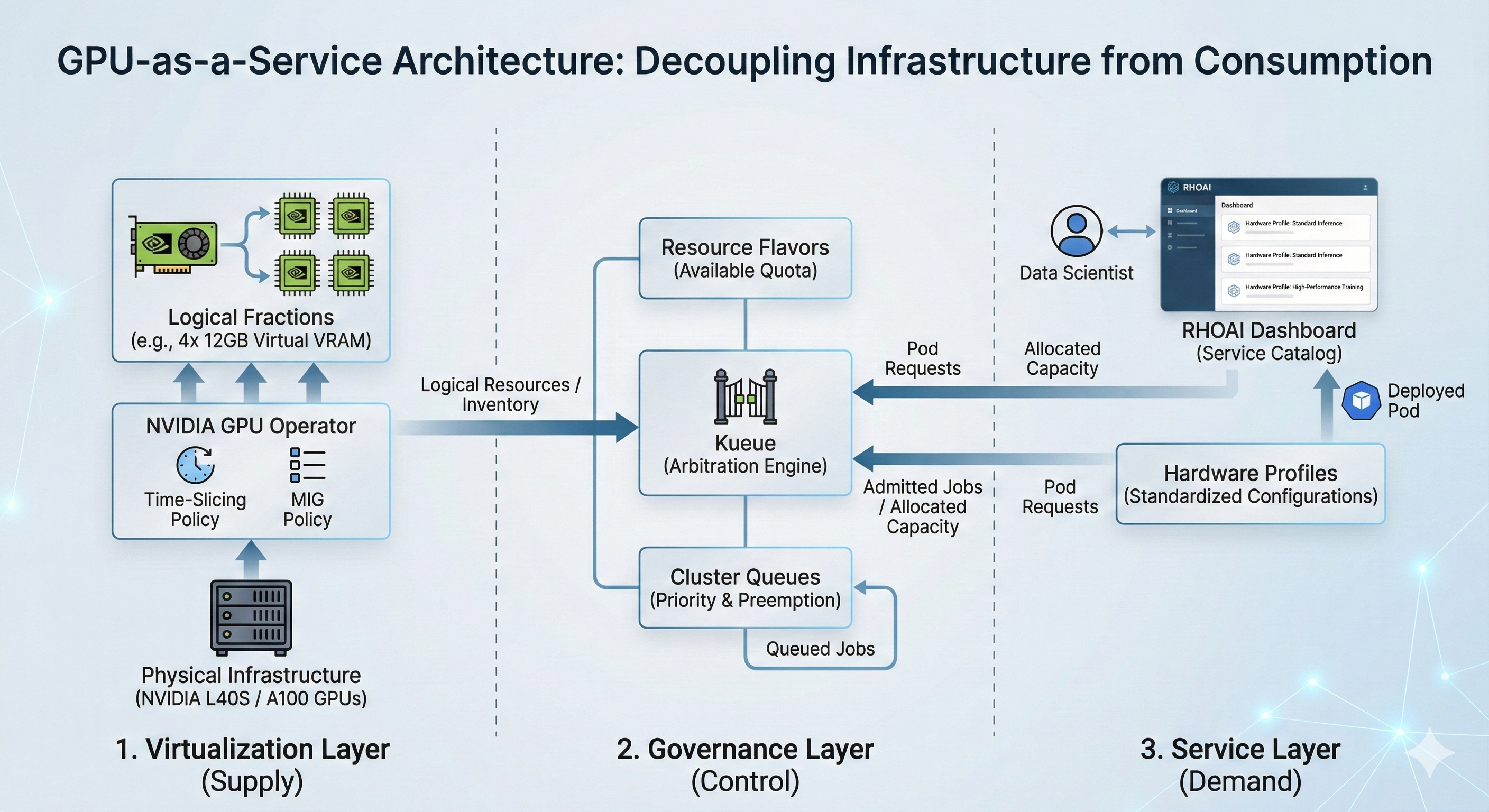

You will engineer a supply chain that decouples Physical Supply from Logical Demand.

1. The Virtualization Layer (Supply)

-

The Asset: A configured NVIDIA GPU Operator with Time-Slicing or MIG policies.

-

The Function: Breaks monolithic hardware into usable fractions.

-

The Example: Instead of dedicating a full NVIDIA L40S (48GB) to a single Jupyter notebook, you apply a Time-Slicing Policy to create 4 logical replicas (12GB virtual VRAM each), quadrupling the serving capacity for inference workloads.

2. The Governance Layer (Control)

-

The Asset: Kueue Resource Flavors and Cluster Queues.

-

The Function: Acts as the arbitration engine. It intercepts pod requests, evaluates available quota, and queues lower-priority jobs during peak contention.

-

The Outcome: Guaranteed access for critical training jobs while backfilling idle cycles with lower-priority development work.

3. The Service Layer (Demand)

-

The Asset: Hardware Profile Custom Resources (CRs).

-

The Function: The user-facing catalog entry. It maps a business requirement (e.g., "Standard Inference") to the underlying infrastructure labels and queue policies.

-

The Outcome: Data scientists deploy standard configurations without needing to understand Kubernetes taints, tolerations, or resource limits.

Deployment Scope

In this course, you will act as the Platform Engineer responsible for industrializing the GPU fleet. You will execute the following infrastructure tasks:

-

Define the Partitioning Strategy: Configure the GPU Operator to enable Time-Slicing on NVIDIA L40S nodes to support high-density concurrent users.

-

Implement Quota Management: Deploy Kueue to manage fair-share scheduling across multiple project namespaces.

-

Publish the Service Catalog: Create and validate Hardware Profiles that expose these managed resources to the OpenShift AI dashboard.

|

Technical Prerequisites

To execute the deployment steps in this guide, ensure your environment meets the following criteria:

|

Proceed to the next section to begin configuring the virtualization layer.