Hardware Profile Tiering Strategy

Table of Contents

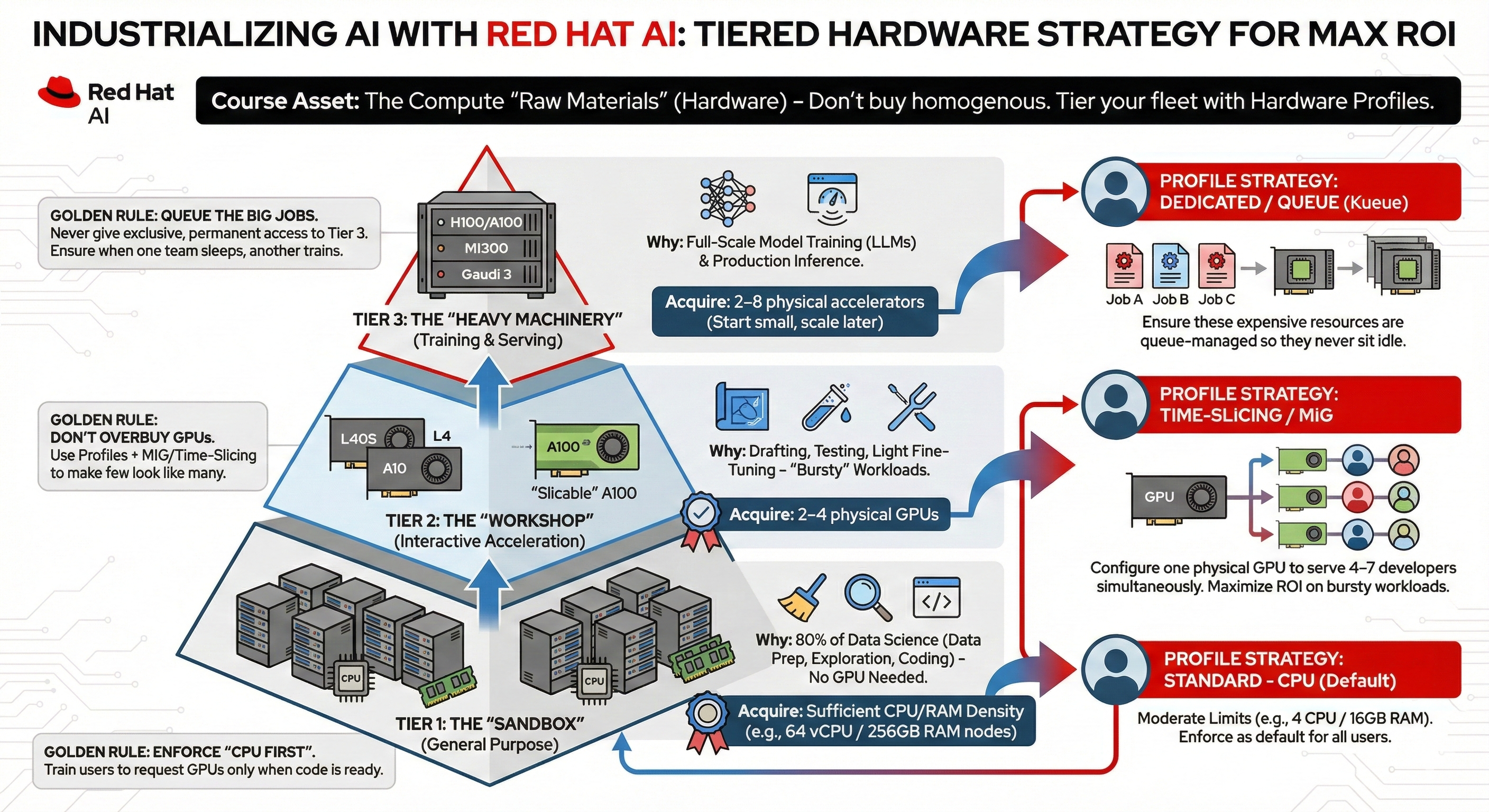

Overview

This document defines the standard HardwareProfile tiers for the OpenShift AI environment. These profiles use the v1 unified identifier schema to ensure compatibility with both the OpenShift AI Dashboard and resource management through Kueue.

Figure 1. Hardware profiles - A Tiered Approach to Allocation

All profiles must be applied to the redhat-ods-applications namespace to be visible in the RHOAI Dashboard.

|

Tier 1: General Purpose (CPU Only)

These profiles are for standard exploratory data science, documentation, and light development.

xSmall Profile

apiVersion: infrastructure.opendatahub.io/v1

kind: HardwareProfile

metadata:

name: tier1-xsmall

namespace: redhat-ods-applications

annotations:

opendatahub.io/display-name: "CPU: xSmall"

opendatahub.io/description: "Dev: 1-2 CPU, 2-4GiB RAM"

opendatahub.io/dashboard-feature-visibility: '["workbench"]'

spec:

identifiers:

- identifier: cpu

displayName: CPU

resourceType: CPU

defaultCount: 1

minCount: 1

maxCount: 2

- identifier: memory

displayName: Memory

resourceType: Memory

defaultCount: 2Gi

minCount: 2Gi

maxCount: 4GiMedium Profile

apiVersion: infrastructure.opendatahub.io/v1

kind: HardwareProfile

metadata:

name: tier1-medium

namespace: redhat-ods-applications

annotations:

opendatahub.io/display-name: "CPU: Medium"

opendatahub.io/description: "Standard: 4-8 CPU, 16-32GiB RAM"

opendatahub.io/dashboard-feature-visibility: '[]'

spec:

identifiers:

- identifier: cpu

displayName: CPU

resourceType: CPU

defaultCount: 4

minCount: 2

maxCount: 8

- identifier: memory

displayName: Memory

resourceType: Memory

defaultCount: 16Gi

minCount: 8Gi

maxCount: 32GiData Engineering & Prep

Designed for high-memory workloads such as heavy Pandas processing or Spark-based ETL.

apiVersion: infrastructure.opendatahub.io/v1

kind: HardwareProfile

metadata:

name: dataprep-heavy

namespace: redhat-ods-applications

annotations:

opendatahub.io/display-name: "DataPrep: High Memory"

opendatahub.io/description: "ETL Workloads: 8-16 CPU, 64-128GiB RAM"

opendatahub.io/dashboard-feature-visibility: '["workbench","pipelines"]'

spec:

identifiers:

- identifier: cpu

displayName: CPU

resourceType: CPU

defaultCount: 8

minCount: 4

maxCount: 16

- identifier: memory

displayName: Memory

resourceType: Memory

defaultCount: 64Gi

minCount: 32Gi

maxCount: 128GiTier 2: Accelerated Computing (NVIDIA GPU)

GPU profiles are tiered based on whether they are shared (sliced) or dedicated (isolated).

Shared GPU (Time-Slicing)

apiVersion: infrastructure.opendatahub.io/v1

kind: HardwareProfile

metadata:

name: nvidia-shared-v100

namespace: redhat-ods-applications

annotations:

opendatahub.io/display-name: "GPU: Shared (V100)"

opendatahub.io/description: "Shared GPU access via time-slicing."

opendatahub.io/dashboard-feature-visibility: '["workbench"]'

spec:

identifiers:

- identifier: nvidia.com/gpu

displayName: "Shared GPU"

resourceType: Accelerator

defaultCount: 1

minCount: 1

maxCount: 1

- identifier: cpu

displayName: CPU

resourceType: CPU

defaultCount: 8

minCount: 4

maxCount: 16

- identifier: memory

displayName: Memory

resourceType: Memory

defaultCount: 32Gi

minCount: 16Gi

maxCount: 64Gi

scheduling:

node:

nodeSelector:

nvidia.com/gpu.product: nvidia-shared-v100

tolerations:

- effect: NoSchedule

key: workload

operator: Equal

value: training

type: NodeIsolated Partition (MIG 1g.5gb)

apiVersion: infrastructure.opendatahub.io/v1

kind: HardwareProfile

metadata:

name: nvidia-mig-1g-5gb

namespace: redhat-ods-applications

annotations:

opendatahub.io/display-name: "GPU: MIG 1g.5gb"

opendatahub.io/description: "Hardware-isolated 5GB GPU slice."

opendatahub.io/dashboard-feature-visibility: '["workbench","model-serving"]'

spec:

identifiers:

- identifier: "nvidia.com/mig-1g.5gb"

displayName: "MIG Slice"

resourceType: Accelerator

defaultCount: 1

minCount: 1

maxCount: 1

- identifier: cpu

displayName: CPU

resourceType: CPU

defaultCount: 8

minCount: 4

maxCount: 16

- identifier: memory

displayName: Memory

resourceType: Memory

defaultCount: 32Gi

minCount: 16Gi

maxCount: 64Gi

scheduling:

node:

nodeSelector:

nvidia.com/gpu.product: nvidia-mig-sliced

tolerations:

- effect: NoSchedule

key: workload

operator: Equal

value: training

type: NodeTier 3: Training & Intensive Workloads

These profiles use strict node placement to ensure jobs run on optimized hardware without interference.

apiVersion: infrastructure.opendatahub.io/v1

kind: HardwareProfile

metadata:

name: training-dedicated-a100

namespace: redhat-ods-applications

annotations:

opendatahub.io/display-name: "Training: Dedicated A100"

opendatahub.io/description: "Full A100 access on isolated nodes."

opendatahub.io/dashboard-feature-visibility: '[]'

spec:

identifiers:

- identifier: "nvidia.com/gpu"

displayName: "A100 GPU"

resourceType: Accelerator

defaultCount: 1

minCount: 1

maxCount: 8

- identifier: cpu

displayName: CPU

resourceType: CPU

defaultCount: 16

minCount: 8

maxCount: 32

- identifier: memory

displayName: Memory

resourceType: Memory

defaultCount: 128Gi

minCount: 64Gi

maxCount: 256Gi

scheduling:

node:

nodeSelector:

node-role.kubernetes.io/training-node: "true"

nvidia.com/gpu.product: "A100-SXM4-80GB"

tolerations:

- key: "workload"

operator: "Equal"

value: "training"

effect: "NoSchedule"

- key: "nvidia.com/gpu"

operator: "Exists"

effect: "NoSchedule"

type: Node